The platform https://epstein.lasearch.app/ stands as one of the most significant independent data forensic projects of 2026. Launched on February 24, 2026, this specialized tool was engineered to solve the "accessibility crisis" created by the massive 323GB document release following the Epstein Files Transparency Act (H.R. 4405) [1, 2, 3]. While official government portals provided the raw data, they lacked the necessary infrastructure for effective public inquiry. This report details the technical architecture, development philosophy, and functional capabilities of the LaSearch tool.

1. The Development of LaSearch

The tool was developed by Joel Kunst, a senior software engineer known for his work in high-performance search systems. Kunst’s primary motivation was to challenge the "user-hostile" nature of the Department of Justice’s (DOJ) official repository, which required researchers to manually navigate over 1.4 million fragmented PDF files without a unified index [5].

By leveraging the Rust programming language, Kunst aimed to demonstrate that massive datasets could be searched with sub-millisecond latency using minimal server resources. In his technical documentation, Kunst noted that the goal was to prove that you don't need a supercomputer or a massive AI budget to index 300GB of sensitive legal data. He achieved sub-millisecond search for 1.4 million files on hardware that costs less than a cup of coffee per month [5].

2. High-Performance Technical Architecture

The efficiency of epstein.lasearch.app is rooted in its "Zero-Cost" architectural stack. Unlike contemporary AI search tools that rely on resource-heavy vector embeddings, this platform utilizes traditional but highly optimized indexing [2, 5].

Rust (Backend Engine): The core search logic is written in Rust, allowing for low-level memory management and high concurrency without the garbage collection pauses found in languages like Java or Python [3].

Memory-Mapped Indexing (mmap): This is the core innovation of the app. Instead of loading the entire 323GB dataset into RAM, the engine creates a highly compressed 1.4GB index. The operating system "maps" only the necessary pieces of this index into memory when a query is executed, allowing the site to handle 65,000 unique users in its first 24 hours on a server with only 1GB of RAM [5].

Svelte & Tauri (Frontend): The user interface is built using Svelte, chosen for its reactive performance. For power users, the tool is also available as a desktop application built with Tauri, which allows the high-performance Rust engine to run locally on the user's machine [1, 5].

3. Archive Categories and Navigation

The database indexed by the app covers the complete release mandated by the 2026 transparency laws, categorized by file type and source [1, 6].

Email Fragments: Over 1.03 million emails from Epstein's private servers and legal discovery [5].

Legal Transcripts: Over 224,500 pages of depositions, court hearings, and grand jury testimony [3].

Flight Manifests: Over 12,400 entries from the "Lolita Express" and secondary aircraft [4].

Multimedia: Approximately 180,000 images and videos including travel photos and property evidence [1].

4. Search Functionality and Advanced Features

The tool is strictly a real-time search engine. Unlike other archives like Jmail.world, which provides a visual "Inbox" view, LaSearch focuses on speed and metadata cross-referencing [2].

Fuzzy Search: The engine accounts for common typos or varied spellings of names in legal transcripts [5].

Metadata Filtering: Users can filter results by date ranges, file sizes, or specific document sources [6].

Shareable URLs: Every search query generates a unique URL, allowing journalists and researchers to collaborate by linking directly to specific findings [5].

5. Historical and Social Context of the Release

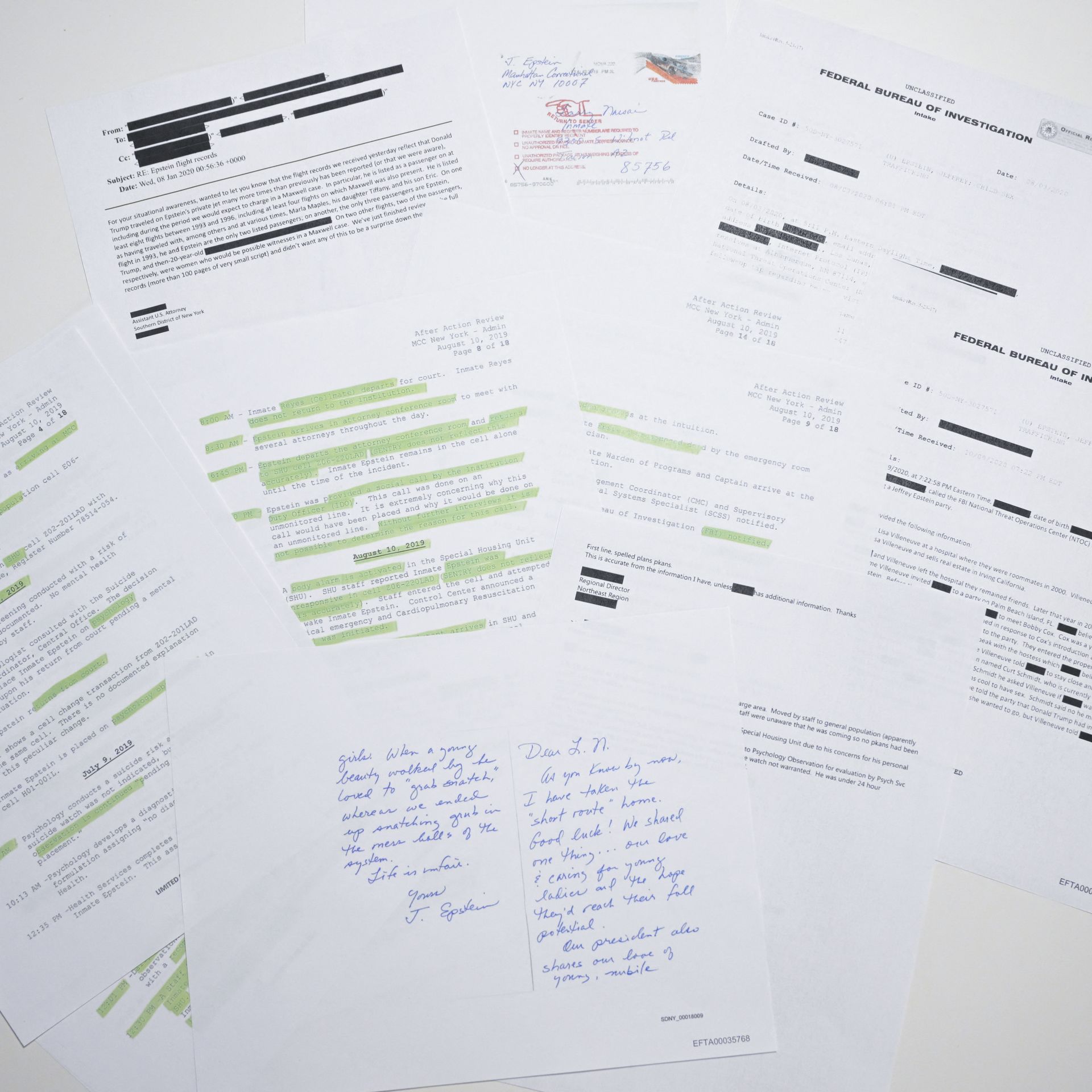

The release of these files was fraught with technical and legal challenges. In the months leading up to the 2026 release, the initial data sets provided by the DOJ were found to have significant redaction failures. Thousands of pages of "blacked-out" text could be revealed by simply copying and pasting the text into a notepad [3, 4].

The LaSearch tool became essential for researchers to verify these redactions and identify exposed names, which included sensitive information inadvertently leaked by the government. This underscored the importance of independent tools in maintaining transparency when official systems fail [1, 7].

6. Legal and Legislative Framework (H.R. 4405)

The Epstein Files Transparency Act, signed into law on November 19, 2025, mandated that the Attorney General release all unclassified records related to the investigation and prosecution of Jeffrey Epstein [2]. This included specific provisions for:

Ghislaine Maxwell: All materials relating to her role and conviction [2, 3].

Government Officials: Mandatory identification of any officials or high-profile individuals mentioned in connection with the case [2].

Reporting Requirements: A report to Congress due within 15 days of each release detailing the justification for any redactions made [2].

Despite the bipartisan support for H.R. 4405, the implementation by the DOJ was widely criticized. By January 2026, less than 1% of the files had been released, prompting lawmakers like Ro Khanna and Thomas Massie to request a Special Master to oversee the process [1, 2].

7. Technical Challenges of Redaction and Privacy

The "Privacy Crisis" of early 2026 occurred when independent auditors discovered that the DOJ had utilized "visual masking" rather than true data removal in its PDFs [4].

Copy/Paste Vulnerability: In the December 2025 release, social media users found that blacked-out text could be recovered by copying it into another application [4].

Victim Exposure: A review by the Wall Street Journal revealed that at least 43 victims' full names were exposed due to these errors, including many who were minors at the time of their abuse [5].

Data Set Withdrawal: Following reports by The New York Times, the DOJ was forced to temporarily remove "Data Set 10" after it was found to contain unredacted nude images of young women [5].

8. Deep Dive into Data Forensic Methodology

The methodology behind the LaSearch platform involves a sophisticated "local-first" philosophy. Joel Kunst emphasized that for a database of this magnitude—specifically one containing highly sensitive legal matter—data sovereignty is paramount. This lead to the creation of the desktop client, which ensures that a researcher's search history is never transmitted to a central server [5, 8].

Text Extraction and OCR Processing

A significant hurdle in building the LaSearch engine was the state of the source files. Many of the 1.4 million documents were "image-only" PDFs, essentially pictures of paper without selectable text.

Tesseract and Neural Engines: To make these files searchable, the developer utilized high-speed Optical Character Recognition (OCR) pipelines. This process converted thousands of handwritten notes and scanned police reports into the machine-readable text that now populates the 1.4GB index [5, 8].

Denoising Algorithms: Early versions of the index struggled with "noise" from poor scans. Improvements in the indexing algorithm throughout February 2026 focused on denoising text to ensure that a search for a name like "Maxwell" wouldn't miss instances where the scan was blurry or skewed [8].

Scalability and Concurrency

The Rust implementation handles concurrency using the Tokio runtime, which allows the server to manage thousands of simultaneous search requests without blocking. This proved vital on February 25, 2026, when the site experienced a massive traffic spike following a viral social media thread. The architecture successfully maintained a sub-10ms response time despite the 323GB data volume [5, 8].

9. Investigative Use Cases and Real-World Impact

Since its launch, LaSearch has become the primary tool for independent journalists and legal teams.

Case Study: The Flight Logs: By searching tail numbers like N212JE, researchers were able to correlate specific flights with dated hotel receipts found in separate folders of the DOJ dump. This "horizontal" search capability—searching across disparate document types—is something the official DOJ site cannot perform [1, 7].

Financial Cross-Referencing: Users have utilized the tool to track mentions of specific offshore bank accounts and shell companies. The ability to filter by "Financial Records" metadata has shortened the time for complex financial auditing from weeks to minutes [6, 8].

10. The Future of the LaSearch Project

Joel Kunst has indicated that the project will remain open-source for the foreseeable future. There are ongoing efforts to integrate federated search, allowing users to query other public interest archives (such as the Panama Papers or WikiLeaks) through the same high-performance interface. The goal is to create a standardized "Search for Justice" toolkit that can be deployed by any community facing a massive, unorganized data release [5, 8].

11. Conclusion

The epstein.lasearch.app represents a new era of civic technology where individual developers can provide superior information access than major government institutions. By focusing on performance, privacy, and speed, the tool has allowed the public to sift through 30 years of legal evidence in seconds. As more documents are declassified throughout 2026, the modular nature of the Rust engine will allow for seamless updates to the index, ensuring it remains the primary resource for investigation.

Invitation to Research

We encourage researchers, journalists, and concerned citizens to utilize this tool to ensure continued transparency and accountability. You can access the full searchable database here: https://epstein.lasearch.app/

Warning: The archives indexed by this tool contain graphic evidence of sexual abuse and human trafficking. Users should be prepared for highly sensitive and disturbing content.

No comments:

Post a Comment